F1 TV and F1 TV Pro, the OTT platform from Formula 1, first launched in 2018, bringing the sport to fans around the world in full HD with localised commentary and multiple camera feeds.

Subscribers watch the world feed, including graphics and audio, as broadcast by the likes of Sky and ESPN, but with Formula 1’s own presentation team commentating.

The initial platform was replaced in 2020 with a focus on reliability and quality, James Bradshaw, head of digital technology at Formula 1, tells TVBEurope.

“The platform and Formula 1, in general, have seen massive growth since,” he adds. “As our subscriber numbers have grown year on year we’ve entered into new markets. It’s available in over 140 markets as a VoD service. We’ve also launched our complimentary second screen live timing service which is available in the widest possible territories we can offer.

“The F1 TV Pro subscription is available in over 80 territories offering live coverage of the entire weekend and is effectively the most complete coverage you can get anywhere.”

Formula 1 worked with Amazon Web Services (AWS) on the re-architecture of F1 TV’s platform, which was hosted by AWS but at the time was not the company’s responsibility. “They leant in very hard, giving us wraparound support, and really just a gold standard of support,” says Bradshaw.

“We worked together to stabilise that platform, and they helped inform the choices that we made in terms of vendor and technology selection and architecture for the new platform.”

The new version of F1 TV is 100 per cent delivered on AWS from the point that it leaves F1’s Media and Technology Centre in London. The feed is delivered via AWS Direct Connect, with F1 using services such as AWS Elemental MediaLive and MediaPackage for its workflows, and MediaConvert for VoD content.

“If you’re watching F1 TV on a Fire TV at home then it’s literally glass to glass,” adds Bradshaw.

AI and subtitles

Formula 1 is also utilising AWS for the live captioning of all races on F1 TV and F1 TV Pro.

In the first iteration of the platform, the company used human captioners who would listen to the main commentary and then re-speak or type the text for viewers to see on screen.

“It’s a highly intensive job and they can only do it for short periods of time, therefore you have to have a team of closed captioners working on a rotating basis,” explains Bradshaw. “Formula 1 runs all day and there are many hours of content, so one of the innovations that we brought in when we rebuilt F1 TV was to look at how we could make that sustainable and scalable.

“We want to be able to offer closed captions, which is fundamentally an accessibility feature, in more and more territories in more and more languages. Therefore we wanted a system that was scalable.”

The answer was to employ an enhanced version of Amazon Transcribe which includes a post-processing layer built with AWS ProServe. This allows Formula 1 to take into account the complex vernacular of the sport. “For example, terms like DRS or the drivers’ names,” explains Bradshaw. “We have many different commentators for each of the different languages. They’ve all got their own pronunciations of different driver names. If you’ve got a Scotsman pronouncing a Frenchman, his name might come out slightly differently to an English commentator, or even a Spanish commentator saying that name. There’s lots of nuance to the training, and that’s why something off the shelf wasn’t good enough.”
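To give a sense of what a post-processing layer of this kind might do, here is a minimal Python sketch that maps mis-transcribed variants onto canonical on-screen terms. It is not Formula 1's or AWS's implementation: the `CANONICAL_TERMS` table, its entries and the `normalise_terms` helper are all hypothetical, chosen only to illustrate the idea.

```python
import re

# Hypothetical variant spellings mapped to canonical on-screen terms.
# These entries are illustrative only, not taken from Formula 1's catalogue.
CANONICAL_TERMS = {
    "d r s": "DRS",
    "dee are ess": "DRS",
    "le clerk": "Leclerc",
    "gasley": "Gasly",
}

# Longest variants first so that multi-word entries win over shorter ones.
_PATTERN = re.compile(
    r"\b("
    + "|".join(
        re.escape(variant)
        for variant in sorted(CANONICAL_TERMS, key=len, reverse=True)
    )
    + r")\b",
    flags=re.IGNORECASE,
)


def normalise_terms(text: str) -> str:
    """Replace known mis-transcriptions with their canonical spelling."""
    return _PATTERN.sub(lambda m: CANONICAL_TERMS[m.group(1).lower()], text)


print(normalise_terms("Le Clerk opens d r s behind Gasley"))
```

A lookup table like this only covers variants someone has already spotted, which is consistent with the regular tuning between race weekends that Bradshaw describes.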

Formula 1 worked with the machine learning experts at AWS in order to build a rich catalogue of terminology, and also address some of the more challenging aspects of the sport. “It may seem simple but if somebody is in fifth position, that is different to a fifth of a second,” says Bradshaw. “It has to know is it going to say fifth, or is it going to be the number five or is it one-fifth (one slash five), and commentators will use all of these different terminologies and we’ve got to work out from context which set of numbers we should show on the screen.”
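The "fifth" problem can be illustrated with a small rule-based sketch in Python. This is an assumption about how such context rules could look, not a description of the trained system: the `render_numbers` function, the two patterns and the choice to display positions as "5th" and fractions as "1/5" are all hypothetical.

```python
import re

# Ordinal words handled by this sketch. "second" is left out on purpose,
# because it is also a unit of time.
ORDINALS = {
    "third": 3, "fourth": 4, "fifth": 5, "sixth": 6,
    "seventh": 7, "eighth": 8, "ninth": 9, "tenth": 10,
}
_WORDS = "|".join(ORDINALS)

# "a fifth of a second", "one tenth of a lap": a fraction of some unit.
_FRACTION = re.compile(rf"\b(?:a|one)[ -]({_WORDS})(?= of an? \w)", re.IGNORECASE)
# "fifth position", "fifth place": a place in the running order.
_POSITION = re.compile(rf"\b({_WORDS}) (position|place)\b", re.IGNORECASE)


def _as_position(n: int) -> str:
    """Format 3..10 as '3rd', '4th', and so on."""
    return f"{n}rd" if n == 3 else f"{n}th"


def render_numbers(text: str) -> str:
    """Pick an on-screen form for ordinal words based on nearby words."""
    text = _FRACTION.sub(lambda m: f"1/{ORDINALS[m.group(1).lower()]}", text)
    text = _POSITION.sub(
        lambda m: f"{_as_position(ORDINALS[m.group(1).lower()])} {m.group(2)}",
        text,
    )
    return text


print(render_numbers("He is in fifth position, a fifth of a second behind"))
```

Anything the two rules do not recognise, such as "he finished fifth", is left as spoken, which is the safer default when the context is unclear.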

Training of the AI took around three months, with Formula 1 initially working on the infrastructure to get it up and running and then using the post-processing for corrections. “We had machine learning experts, actually a team from AWS, who were native language speakers and people who have context of the sport as well,” continues Bradshaw. “The data scientists built the training, and it’s in a steady state. We now have a different set of people, not data scientists, who are just doing the regular tuning and training in between every race weekend.”

Subtitling hasn’t gone totally AI. Humans are still part of the loop, tasked with looking for any rogue words or statements that may appear over a race weekend. “That will always happen,” states Bradshaw. “It’s their job to make sure that what goes out to a viewer who might be really dependent on closed captions to understand the context isn’t confusing.

“The feedback that we’ve heard from viewers who have an audio impairment is that it’s more important for it to be accurate than it is to be fully complete,” he adds. “We will deny an entire sentence if it doesn’t add value to that user or makes it harder to follow what’s going on.”
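The article does not say how a sentence is judged unhelpful. One possible automated signal, sketched below in Python, is the per-word confidence score that speech-to-text services typically return: if any word falls below a threshold, the whole sentence is withheld. The `Word` structure, the `caption_or_none` helper and the `MIN_CONFIDENCE` value are hypothetical and simplified for illustration.

```python
from typing import List, Optional, Tuple

# Simplified, hypothetical word record: (text, confidence from 0.0 to 1.0).
Word = Tuple[str, float]

MIN_CONFIDENCE = 0.85  # hypothetical threshold, not a figure from Formula 1


def caption_or_none(
    sentence: List[Word], min_confidence: float = MIN_CONFIDENCE
) -> Optional[str]:
    """Return the sentence text, or None if any word is below the threshold."""
    if not sentence or any(conf < min_confidence for _, conf in sentence):
        return None
    return " ".join(word for word, _ in sentence)


print(caption_or_none([("Box", 0.98), ("box", 0.97)]))
print(caption_or_none([("Box", 0.98), ("socks", 0.41)]))
```

Dropping the sentence rather than the single doubtful word follows the priority Bradshaw sets out: a caption that is missing is preferable to one that misleads.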

Personalisation

The idea of personalisation is something that Formula 1 is keen to explore further, particularly in terms of making the sport accessible to a wider range of viewers. “We want to make it more understandable for people from a broader background, who maybe have less Formula 1 expertise,” explains Bradshaw. “We’re looking at ways that we can make the content, whether that’s the graphics or commentary, designed for somebody who is entry-level in their understanding of the sport, rather than being thrown in the deep end with the expectation that they have years of experience of watching F1.”

One of the first instances of that plan will be the Hungarian Grand Prix on 23rd July. Formula 1 and Sky are working together to produce a special broadcast aimed at children in the UK and Germany.

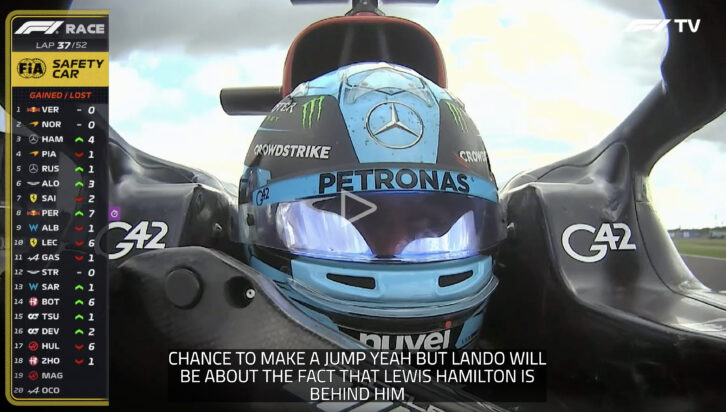

Formula 1 will create a dedicated international feed with bespoke graphics, sound effects and special features, including 3D augmented graphics on specific camera angles.

“We ran a really exciting workshop in Biggin Hill with a group of high school students of different ages to understand what it was that they would be interested in and looking for. We’re really pleased that some of the ideas that came out of that day are being implemented. I’ve seen some previews of it and it’s really exciting content,” says Bradshaw.