The decision to implement a Time Addressable Media Store (TAMS) API alongside Techex’s tx Darwin modular software platform in its debut live use case at On Air 2025 underlined how quickly, in only a couple of years, the concept has established itself at the cutting edge of broadcast. Yet it is arguable that, in general, how far it diverges from existing hybrid and cloud storage models is not yet widely understood.

TAMS, which was open-sourced by BBC R&D in 2023, received its public debut at IBC2024 with a demo on the stand of early enthusiast Amazon Web Services (AWS). Since then, the BBC has continued to work with AWS, “building the TAMS community to add more partners and end-users through a programme of AWS Cloud Native Agile Production partner enablement sessions on both sides of the Atlantic.”

Chris Swan, a principal solutions architect specialising in content production at AWS, recalls that the company’s awareness of TAMS emerged from a “critical inflection point in 2023” when it was confronting fundamental challenges with customers’ cloud migration trajectories: “What became immediately clear in our conversations with customers like the BBC was that traditional ‘lift and shift’ approaches weren’t going to deliver the transformational benefits our customers needed.”

When AWS became aware that BBC R&D had published the TAMS specification as open source, adds Swan, it was a “eureka moment. Here was a cloud-native approach that could fundamentally reimagine how media workflows operate, moving from file-based to content-centric architectures. The application potential was immediately apparent: this wasn’t just about storage, it was about enabling true interoperability and breaking down the vendor silos that have constrained our industry for decades.”

Chunked media

In a nutshell, TAMS enables media storage in chunked form, facilitating the transfer of only the section of content required at any one time. Swan explains: “At its heart, TAMS uses standard object storage to hold the media as small chunked segments with timing and identity as key primitives. This removes the need for high-performance file systems and duplication between the edit file storage system and the archive object storage, all of which drives cost savings. Being software-driven, it also allows for faster resource deployment times compared to traditional workflows and the ability to scale event-driven workflows up or down in minutes.”
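To illustrate the idea of fetching "only the section of content required", here is a minimal Python sketch of a recording held as timed chunks. It is not the TAMS API: the `Segment` class, the `segments_for_range` helper, the 2-second chunk length and the `obj-…` object keys are all hypothetical, and the real specification defines its own data model and time-range notation. The point is simply that when every chunk carries a time range and an object identity, a request for ten seconds of a 24-hour recording touches five small objects rather than one huge file.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class Segment:
    """Hypothetical chunk: a half-open time range [start, end) in seconds
    plus the key of the object in object storage that holds its media."""
    start: Fraction
    end: Fraction
    object_id: str


def segments_for_range(segments, start, end):
    """Return only the segments that overlap [start, end).

    A linear scan keeps the sketch short; a real store would index
    segments by time so lookups do not scale with recording length.
    """
    return [s for s in segments if s.start < end and s.end > start]


# Hypothetical 24-hour recording stored as 2-second chunks.
# Fraction keeps timing exact (no floating-point drift over long recordings).
recording = [
    Segment(Fraction(t), Fraction(t + 2), f"obj-{t // 2:05d}")
    for t in range(0, 24 * 3600, 2)
]

# A client wanting ten seconds starting one hour in fetches just these objects.
clip = segments_for_range(recording, Fraction(3600), Fraction(3610))
print([s.object_id for s in clip])
```

Using half-open ranges means a chunk ending exactly at the requested start (or starting exactly at the requested end) is not fetched, so adjacent requests never double-count a chunk.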

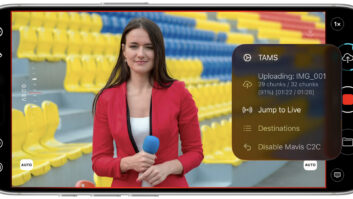

David Mitchinson, solutions director of Techex, highlights some of the other benefits offered by the API: “The TAMS timeline means that media sections are indexed uniquely and synchronisation between essences is retained, making it possible to store video and audio components separately. Once stored, the original asset is never changed (it is ‘immutable’) but can be registered multiple times in metadata to efficiently implement a range of functions from editing to time delays. Multiple operators can be working on the same content at the same time, and with the scalability of cloud it’s easy to see how powerful this methodology can be.”
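Mitchinson's point about immutable media being "registered multiple times in metadata" can be sketched in a few lines of Python. Again this is a simplified, hypothetical model rather than the TAMS specification: `Segment`, `make_flow`, `register`, the millisecond timeline and the object keys are invented for illustration, and whole segments are included without trimming. Video and audio are kept as separate flows on a shared timeline; a clip and a time delay are then just new lists of references to the same stored objects, so no media is copied or altered.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    start: int      # position on the flow's timeline, in milliseconds (hypothetical unit)
    end: int        # exclusive end of the segment
    object_id: str  # key of an immutable stored object


# Hypothetical immutable object store: each chunk is written once, never changed.
objects = {f"v{i}": b"<video chunk>" for i in range(5)}
objects.update({f"a{i}": b"<audio chunk>" for i in range(5)})


def make_flow(prefix, count, length_ms=2000):
    """Build a flow (one essence) as consecutive segments on a shared timeline."""
    return [Segment(i * length_ms, (i + 1) * length_ms, f"{prefix}{i}") for i in range(count)]


video = make_flow("v", 5)  # video and audio stored separately...
audio = make_flow("a", 5)  # ...but aligned by their shared time positions


def register(flow, start, end, offset=0):
    """Create a new flow that references the same objects for segments
    overlapping [start, end), shifted by `offset` ms on the new timeline.
    Only metadata is created; the stored objects are untouched."""
    return [
        Segment(s.start + offset, s.end + offset, s.object_id)
        for s in flow
        if s.start < end and s.end > start
    ]


clip_video = register(video, 2000, 6000, offset=-2000)  # an edit: seconds 2-6, re-based to zero
delayed_audio = register(audio, 0, 10_000, offset=5000)  # a 5-second time delay

print([(s.start, s.object_id) for s in clip_video])
print([(s.start, s.object_id) for s in delayed_audio][:2])
print(len(objects))  # still ten objects: nothing was duplicated
```

Because each new flow only holds references, any number of operators could create their own clips or delays from the same recording at the same time without contending for, or copying, the underlying media.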

Swan is equally convinced of its significance, describing it as a “paradigm shift” that delivers “transformational advantages across multiple dimensions. Unlike proprietary systems that lock customers into single-vendor ecosystems, TAMS provides an open framework that enables best-of-breed tool selection across the entire production pipeline, fundamentally changing how organisations approach media infrastructure.”

The technology can also play a significant role in preparing broadcasters for the AI era. “Perhaps most importantly, TAMS creates a foundational layer for AI-powered workflows, enabling real-time analysis and automated content enhancement that simply wasn’t possible with legacy file-based systems, positioning organisations for the next generation of media production capabilities,” says Swan.

Taking TAMS to market

Having recognised the potential of TAMS, AWS formalised the Cloud Native Agile Production (CNAP) programme in early 2024, partnering with the BBC and Sky as anchor customers alongside eight technology partners, including Adobe, Techex and CuttingRoom, to create a comprehensive ecosystem approach. A period of validation and enhancement of the TAMS API specification preceded the industry launch at IBC2024, where AWS’ TAMS demonstration was presented over 60 times, generating interest from 23 customers and 24 partners, reports Swan.

Subsequently, there has been further work with the BBC to develop the TAMS specification, a series of jointly-run enablement workshops to help attendees “dive deep” with the TAMS API, and then, in October, the first-ever live production use case of TAMS at the global student-led broadcast event, On Air, hosted out of Ravensbourne University in London. The ambitious project brought together over 900 people, including students and industry professionals, to deliver a 24-hour continuous live programme streamed worldwide on YouTube.

John Biltcliffe, a senior solutions architect at AWS, explains how TAMS was used during On Air: “Given that this was the debut live use case, the deployment was carefully scoped to ensure no impact on the broadcast. The core function of TAMS was to perform a continuous single, 24-hour record of the content into the TAMS store, completely replacing traditional video server infrastructure.”

He points to simultaneous access and clipping as the “breakthrough capability” for this project. “As the live broadcast was running, students around the world were able to log into the TAMS UI, view the live ingest, select specific time-addressable sections, and clip/download that content. For instance, one student focused on editing clips for social media, relying on TAMS to scour the stream for content immediately after it happened. The final highlights of the 24-hour broadcast were created using content sourced directly from TAMS, and after the event, all the YouTube deliverables were created from the TAMS recording.”

Reflecting on the demo, Biltcliffe says the key lesson was confirmation that TAMS can support workflows in which live content is used immediately during ingest, regardless of location. “This real-life environment successfully tested and pushed the technology on an ‘epic scale’. The success has reinforced our strategy to adopt TAMS for future live production use cases, validating the model of making content instantly available for repurposing (such as creating social media clips or highlights packages) during the event itself.”

With AWS and the BBC working towards placing stewardship of the TAMS specification with an open-source foundation, and demand expected to spread from initial news and sports applications to reality TV, live entertainment and post production, the next few years are looking extremely busy.

Indeed, Swan concludes by offering the following prediction: “By 2027, I expect TAMS-based workflows to become a significant component of new media infrastructure deployments, driven by the compelling combination of cost efficiency, interoperability, and AI-readiness that makes adoption inevitable for organisations seeking competitive advantage.

“The ultimate vision is an industry where content flows seamlessly between organisations, tools and platforms, shifting focus from managing files to creating compelling experiences for audiences worldwide while enabling new forms of collaboration and content monetisation that weren’t previously possible.”

- This article originally featured in the December issue of TVBEurope.