In TVBEurope’s August issue, we hear from production agency NOMOBO about their work on Miami’s Ultra Music Festival.

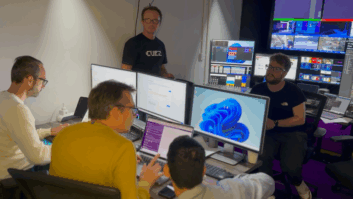

The company used a remote production workflow, with its MCR (master control room) based in Amsterdam. The MCR has capacity for nine positions, including livestream/multicam director, assistant director, TD/switcher, engineer in charge, graphics operator, and two EVS operators.

The facility also includes an audio booth and a tech booth, which is a working space for the encoding engineer and/or digital producer.

Constantijn van Duren, CCO and founder of NOMOBO, gives more details about the Netherlands-based operation.

From a connectivity standpoint, what were the key challenges you had to address when developing a backbone for NOMOBO’s remote production capabilities?

Our greatest challenge is ensuring we have the shortest peering from the on-site connections provided – we always require connections from two independent providers – to the backbone we use globally (Google).
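In practice, "shortest peering" comes down to measuring each path and preferring the best one. The sketch below is a minimal illustration, assuming round-trip times from each of the two on-site providers to the backbone have already been measured; the `Uplink` type, provider names and figures are hypothetical and do not describe NOMOBO's actual tooling.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Uplink:
    """One on-site internet connection (hypothetical model)."""
    provider: str
    rtt_ms: Optional[float]  # measured round-trip time to the backbone; None if unreachable


def pick_uplink(uplinks: List[Uplink]) -> Uplink:
    """Return the reachable uplink with the lowest round-trip time.

    Raises ValueError if neither provider can currently reach the backbone.
    """
    reachable = [u for u in uplinks if u.rtt_ms is not None]
    if not reachable:
        raise ValueError("no reachable uplink")
    return min(reachable, key=lambda u: u.rtt_ms)


# Hypothetical measurements for two independent providers.
links = [Uplink("provider-a", 38.0), Uplink("provider-b", 21.5)]
print(pick_uplink(links).provider)
```

Keeping the second provider in the list matters even when it is slower: if the preferred link drops (its RTT becomes `None`), the same call falls back to the other one.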

How did NOMOBO’s decision to use the SRT protocol for video transport address that? And what does that part of the workflow look like in practical terms?

We were early adopters of SRT because it provided the best image and audio quality with the lowest delay. But working in a multi-camera remote environment also means that the isolated camera feeds sent from the on-site location to our remote control rooms need to be frame-accurate. That is why we chose to deploy hardware encoders from Haivision, which let us encode isolated camera signals that are decoded in sync at our MCRs.
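One generic way to get frame-accurate output from several isolated feeds is to buffer decoded frames and release them only once every camera has delivered a frame for the same capture instant. The sketch below assumes each encoder stamps frames from a shared clock; it illustrates the idea only and is not how Haivision's encoders or decoders implement sync. The `FrameAligner` class and feed names are hypothetical.

```python
from collections import defaultdict


class FrameAligner:
    """Hold frames from several camera feeds until every feed has a frame
    with the same capture timestamp, then release them together."""

    def __init__(self, feeds):
        self.feeds = set(feeds)
        self.pending = defaultdict(dict)  # timestamp -> {feed: frame}

    def push(self, feed, timestamp, frame):
        """Buffer one decoded frame.

        Returns a complete {feed: frame} set for `timestamp` once all feeds
        have arrived, otherwise None.
        """
        if feed not in self.feeds:
            raise KeyError(f"unknown feed {feed!r}")
        slot = self.pending[timestamp]
        slot[feed] = frame
        if set(slot) == self.feeds:
            return self.pending.pop(timestamp)
        return None


aligner = FrameAligner(["cam1", "cam2"])
aligner.push("cam1", 0, "cam1-frame0")          # waits for cam2
print(aligner.push("cam2", 0, "cam2-frame0"))   # both frames released together
```

A production system would also need to discard timestamps that never complete (for example after a dropped packet); the sketch leaves that out for brevity.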

How does NOMOBO’s ability to remotely monitor/control cameras change with the introduction of remote production?

We have been developing our remote production workflows since 2012 as we have toured with Ultra Music Festival worldwide, producing the Ultra Live broadcasts on YouTube from every continent. It started with a setup in which the multi-camera stage capture was handled entirely on-site and a clean feed was sent back to our MCR in Amsterdam, where we enhanced the final broadcast with interview playouts, bumpers, leaders, graphics and multiband audio processing.

In 2017 we went a step further with a concert show in Ibiza, where we switched the multi-camera feed of the stage performance from Amsterdam. Due to budgetary constraints, we could only take part of the crew to the island. It turned out we had it under control, and we started to develop that approach further, replacing protocols such as WebRTC with SRT and beyond.

When Covid impacted our booked events calendar in 2020, we pivoted our remote production capabilities to a model where we deployed camera kits wherever needed, essentially turning everything from boardrooms to CEOs’ garden sheds into small studios. Those kits consisted of Blackmagic Pocket Cinema Cameras and ATEM Mini switchers, together with additional hardware and software for audio capture, comms and networking. That allowed us to control all aspects of the capture and live broadcast requirements fully remotely. We developed those kits so a CEO could set one up in minutes without support.

With these developments, we could create a live ‘cinematic’ look for stakeholders presenting on camera from virtually any remote location across the globe. Their keynote presentations were recorded or broadcast live through our remote control rooms in Amsterdam or Los Angeles.

Talk us through your routing and distribution workflow and how/where the Teranex standards converters fit into the mix.

As our remote production facilities and control room infrastructure kept growing, we also needed to expand our routing infrastructure. With the massive volume of online events we were producing, a lot of cross-conversion and processing was required, and eventually we chose to implement the Ross Ultrix platform to take care of that. It is a solution that can grow with you as you move from HD to 4K, and it handles multi-viewing, audio processing and clean switching without the need to cable our equipment rooms extensively. Teranex AV is our Swiss army knife for converting whatever signal comes in remotely, so that we can match it to the MCR broadcast format for a YouTube show.
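Matching an incoming remote signal to the house format means working out which properties differ and which conversions that implies. The sketch below assumes a hypothetical 1080p50 house format (the interview does not state NOMOBO's MCR format) and only lists the required steps; the actual conversion is done in hardware by the Teranex, and `VideoFormat` and `conversions_needed` are illustrative names, not a Blackmagic API.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class VideoFormat:
    width: int
    height: int
    fps: float
    interlaced: bool


def conversions_needed(src: VideoFormat, target: VideoFormat) -> List[str]:
    """List the conversion steps needed to bring `src` into the `target` format."""
    steps = []
    # Scan type first, so later steps work on the target scan type.
    if src.interlaced and not target.interlaced:
        steps.append("deinterlace")
    elif not src.interlaced and target.interlaced:
        steps.append("interlace")
    if (src.width, src.height) != (target.width, target.height):
        steps.append(f"scale {src.width}x{src.height} -> {target.width}x{target.height}")
    if src.fps != target.fps:
        steps.append(f"frame-rate convert {src.fps} -> {target.fps}")
    return steps


HOUSE_FORMAT = VideoFormat(1920, 1080, 50.0, False)  # hypothetical MCR format
remote_feed = VideoFormat(1280, 720, 59.94, False)   # e.g. a 720p59.94 contribution
print(conversions_needed(remote_feed, HOUSE_FORMAT))
```

An empty list means the feed already matches and can go straight into the router.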

You had near-zero latency from Amsterdam to Miami, with hard cuts for the live mix made in Amsterdam and reflected in the temporary studio back in Miami. Can you expand on that for us?

We can get an SRT delay of 1.2 seconds from Miami to Amsterdam on frame-synced camera feeds. But to ensure the camera operator on-site gets the red/green tally light the moment our director in Amsterdam switches, we synced the ATEM Constellation switchers in Amsterdam and Miami over an IP network. As the ATEM protocol carries tally and shading data, this is the ultimate solution, enabling remote production where operators can control anything from wherever they are.
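To put the 1.2-second figure in context, it helps to express it in frames. The interview does not state the production frame rate, so the rates below are assumptions: at 25 fps, 1.2 seconds is 30 frames, and at 50 fps it is 60 frames. A tally that waited on the returned video would lag the director's cut by that many frames, which is why tally travels separately over the switcher-to-switcher IP link.

```python
def delay_in_frames(delay_s: float, fps: float) -> float:
    """Convert an end-to-end delay in seconds into a number of video frames."""
    return delay_s * fps


SRT_DELAY_S = 1.2  # figure quoted in the interview

# Frame rates here are assumptions; the production rate is not stated.
for fps in (25.0, 50.0, 59.94):
    print(f"{fps} fps: {delay_in_frames(SRT_DELAY_S, fps):.1f} frames")
```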

And how important was it to have some of the production capabilities present on-site?

Our approach is that our creative and production leads are always near the client, on-site. But the fewer people who travel, the better, as that helps reduce costs like hotels, flights and catering while also reducing our environmental impact. So for each project, we spend time walking through exactly who will be where, with one central question: can this role contribute fully to the production output from our remote facilities?

In addition to the MCR, NOMOBO has created a ‘command centre’ where every facet of the production, local or remote, can be monitored. Tell us about the thinking behind that and what intercom tech you’ve deployed to connect it to the on-prem studio and local MCR.

We have implemented all key elements of the RTS Odin platform throughout our workflows, and it has been a big step forward. We now have IP-connected intercom panels at every position in the production, from creative, production and client stakeholders on-site to the digital producers and encoding engineers monitoring every stream closely from our remote command centre during a live broadcast.

As our digital events consist of many different streams, including multiple languages, sign language and closed captioning, we centralise this work and move it off-site. But with so many output streams come many paths to monitor and QC (quality control), and that is where the command centre team back in Amsterdam comes in. If there is an issue, that team can immediately step in and update our client on what is happening and which contingency plans we are executing to solve the problem.
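The monitoring load grows multiplicatively. As a rough illustration, assume (hypothetically) that every language is offered in every accessibility variant: three languages and three variants already give nine output streams, each a separate path for the command centre to QC. The language codes and variant names below are placeholders, not an actual NOMOBO stream list.

```python
from itertools import product


def output_streams(languages, variants):
    """Enumerate one output stream per (language, accessibility variant) pair.

    Assumes every language is offered in every variant; real events may not be.
    """
    return [f"{lang}/{variant}" for lang, variant in product(languages, variants)]


streams = output_streams(["en", "es", "nl"], ["main", "sign-language", "captions"])
print(len(streams))  # 9
```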

NOMOBO has also implemented Dante for audio over IP. Can you walk us through that decision and its benefits?

Using Dante Connect for high-quality audio-over-IP streams at the lowest delay allows us to remotely monitor audio mixing boards on-site at several stages within a project like Ultra Music Festival. PFL (pre-fade listen) routing is streamed through Dante Connect, as are the ATEMs’ PGM outputs, so the audio engineer has full control of the mixer and works on it from Amsterdam in our soundproofed, optimised mixing environment, instead of in a Portakabin office with terrible acoustics, surrounded by stages with sound bleed.