An add-on accessory lens that could allow ordinary cameras to do 3D, high dynamic range and light field manipulation (allowing focus changes in post) has been developed by university researchers and is being revealed at the SIGGRAPH [http://s2013.siggraph.org/] conference and exhibition that is taking place in Anaheim, California, this week.

A team led by Alkhazur Manakov, from Saarland University in Saarbrücken, Germany, the Max-Planck-Institut für Informatik, and Delft University of Technology in the Netherlands, is presenting a paper called “A Reconfigurable Camera Add-On for High-Dynamic-Range, Multi-Spectral, Polarization, and Light-Field Imaging.”

The initial KaleidoCamera system has been designed to fit between the lens and the camera body of a DSLR, but the technology is adaptable and could have wider implications.

The design is similar to a kaleidoscope, creating multiple copies (nine in the prototype) of each beam of light, which then pass through different optical filters, delivering them to the sensor as a grid of separate images, which can then be manipulated in post to give the desired final image.
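
As a rough illustration of that last step, the sketch below cuts a single sensor frame into a 3 x 3 grid of tiles, one per copy. It is only a minimal sketch: the function name `split_grid` and the tiny 6 x 6 test frame are invented for the example, and it assumes the copies are equally sized and perfectly aligned to the grid, which a real prototype would need calibration (and un-mirroring of the reflected copies) to achieve.

```python
def split_grid(frame, rows=3, cols=3):
    """Split a 2D list of pixel values into rows x cols equally sized tiles.

    Hypothetical helper: assumes the copies tile the sensor exactly.
    Tiles are returned in row-major order (top-left first).
    """
    height, width = len(frame), len(frame[0])
    tile_h, tile_w = height // rows, width // cols
    tiles = []
    for r in range(rows):
        for c in range(cols):
            # Take the rows of this tile, then the columns within each row.
            tile = [row[c * tile_w:(c + 1) * tile_w]
                    for row in frame[r * tile_h:(r + 1) * tile_h]]
            tiles.append(tile)
    return tiles


# A toy 6 x 6 "sensor frame" whose pixel value encodes its position.
frame = [[y * 6 + x for x in range(6)] for y in range(6)]
tiles = split_grid(frame)
```

Each entry of `tiles` can then be treated as an ordinary, lower-resolution image that has passed through its own filter.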

“A minor modification of the design also allows for aperture sub-sampling and, hence, light-field imaging. As the filters in our design are exchangeable, a reconfiguration for different imaging purposes is possible. We show in a prototype setup that high dynamic range, multispectral, polarisation, and light-field imaging can be achieved,” explained the paper (which is downloadable as a pdf – http://resources.mpi-inf.mpg.de/KaleidoCam/files/KaleidoCamera-Manakov2013.pdf).

The images from the prototype are lower resolution than you would normally see with such cameras, although this will improve with development. But as DSLRs have so much higher resolution than HD, it shouldn’t be too difficult to get good video images from the system. Indeed, the team say in their video [http://resources.mpi-inf.mpg.de/KaleidoCam/files/KaleidoCamera-Manakov2013.mp4] that “our system, when configured for light field imaging, generates very high spatial resolution light field views of almost full HD resolution.” Even if the system is only used on a DSLR, it would be useful for timelapse sequences, which have to cope with wildly varying lighting conditions and from which it would be possible to extract perfectly exposed or focussed images. At the moment, because of the way the system is designed, the HDR and light field implementations are separate (using swappable components).

Depth of field

Light field images have most notably been used by the tiny Lytro camera (which uses multiple micro lenses), and allow users to change the focus after capturing the image. There has been a lot of interest in using the technology on mobile phone cameras, with a new start-up, Pelican (with Nokia backing), and Toshiba looking to enter the fray. In broadcasting, it would be very useful for observational documentaries that want a shallow depth-of-field look but also want anything of interest to be in focus, as the focus can be adjusted freely in post. The KaleidoCamera can potentially give a shallower depth of field than almost any physical lens (the equivalent of f/0.7), thanks to algorithms that allow it to extrapolate beyond the aperture of the physical lens, so that it can even give the impression of macro photography in a normal scene.

How it works

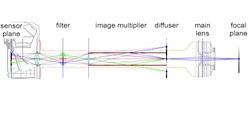

In HDR or multispectral modes, the main lens casts its image onto the plane normally occupied by the camera’s image sensor. In place of the sensor there is a diffuser that “functions as a back-projection screen and enables physical image copying avoiding parallax,” according to the video.

“The screen is observed through a kaleidoscope-like arrangement of mirrors that physically copies the image. The copies can then be differently filtered and finally cast to the camera sensor with a one-to-one imaging system.” For HDR the KaleidoCamera is fitted with interchangeable neutral density filters.
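
To give a feel for how differently filtered copies could be combined, here is a minimal sketch of a generic HDR merge. It is not the team's published pipeline: the function name `merge_hdr`, the filter transmissions and the clipping thresholds are all assumptions made for the example, and pixel values are assumed to be linear and normalised to the range 0 to 1. Each usable sample is divided by its filter's transmission to undo the attenuation, and the results are averaged.

```python
def merge_hdr(copies, transmissions, low=0.02, high=0.98):
    """Merge attenuated copies of one image into relative scene radiance.

    copies: list of 2D lists with linear pixel values in 0..1.
    transmissions: fraction of light each (hypothetical) ND filter passes.
    low/high: assumed thresholds for noise-floor and clipped samples.
    """
    height, width = len(copies[0]), len(copies[0][0])
    merged = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            samples = [(img[y][x], t) for img, t in zip(copies, transmissions)]
            # Keep well-exposed samples and undo the filter attenuation.
            usable = [v / t for v, t in samples if low < v < high]
            if usable:
                merged[y][x] = sum(usable) / len(usable)
            else:
                # Crude fallback: trust the sample nearest mid-grey.
                v, t = min(samples, key=lambda s: abs(s[0] - 0.5))
                merged[y][x] = v / t
    return merged


# Toy scene with one dim and one very bright pixel (relative radiance).
scene = [[0.5, 4.0]]
transmissions = [1.0, 0.25, 0.0625]
# Simulate what the sensor records behind each filter (clipping at 1.0).
copies = [[[min(1.0, r * t) for r in row] for row in scene]
          for t in transmissions]
radiance = merge_hdr(copies, transmissions)
```

In this toy case the bright pixel clips behind the two lighter filters, so only the darkest copy contributes to it, while the dim pixel is recovered from all three.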

In light field mode, the diffuser is replaced with a pupil-matching lens. The mirrors then create multiple sub views, each with a different perspective shift.
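
Refocusing from such sub views is commonly done with a "shift-and-add" approach: each view is shifted in proportion to its perspective offset and the results are averaged, so that objects at the chosen depth line up and everything else blurs. The sketch below illustrates that general idea only. The function name `refocus`, the integer shifts, the edge clamping and the sign convention are simplifying assumptions, and the paper's own processing may well differ.

```python
def refocus(views, offsets, shift):
    """Shift-and-add refocusing over a set of sub views.

    views: list of 2D lists, all the same size.
    offsets: (du, dv) perspective offset of each view, e.g. -1, 0 or 1.
    shift: whole pixels of shift per unit offset; selects the focal plane.
    """
    height, width = len(views[0]), len(views[0][0])
    out = [[0.0] * width for _ in range(height)]
    for view, (du, dv) in zip(views, offsets):
        for y in range(height):
            # Clamp to the image border instead of reading outside it.
            sy = min(max(y + shift * dv, 0), height - 1)
            for x in range(width):
                sx = min(max(x + shift * du, 0), width - 1)
                out[y][x] += view[sy][sx] / len(views)
    return out


# Three horizontal views of a single bright point whose position moves
# by one pixel per view (a disparity of 1).
views = [
    [[0, 0, 1, 0, 0, 0, 0]],  # offset -1: point at x = 2
    [[0, 0, 0, 1, 0, 0, 0]],  # offset  0: point at x = 3
    [[0, 0, 0, 0, 1, 0, 0]],  # offset +1: point at x = 4
]
offsets = [(-1, 0), (0, 0), (1, 0)]
sharp = refocus(views, offsets, shift=1)    # focused on the point
blurred = refocus(views, offsets, shift=0)  # focused elsewhere
```

With `shift=1` the three copies of the point land on the same pixel, while with `shift=0` its energy is spread over three pixels, which is the out-of-focus blur.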

It is even possible to virtually change the viewpoint, to create camera movements. This enables it to generate 3D. “The baseline between the two views can be continuously adjusted, increasing the stereo perception. We also virtually move the plane of convergence for comfortable stereo depiction,” explained the video. Stereo can also be combined with changing both the viewing position and the field of view.

As it is a virtual lens, it can also have any shape, allowing users to create different bokeh (out of focus blur) effects.
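
One simple way to picture a shaped virtual aperture is as a set of weights over the sub views: views inside the shape count towards the final image, views outside it do not. The sketch below is purely illustrative. The name `weighted_blend`, the 3 x 3 masks and the one-pixel test views are all made up for the example, and it assumes the views have already been aligned for the chosen focal plane.

```python
def weighted_blend(views, weights):
    """Average sub views using per-view weights (a virtual aperture mask)."""
    total = sum(weights)
    if total == 0:
        raise ValueError("aperture mask must select at least one view")
    height, width = len(views[0]), len(views[0][0])
    return [[sum(w * v[y][x] for v, w in zip(views, weights)) / total
             for x in range(width)]
            for y in range(height)]


# Nine one-pixel views in row-major order; the value is the view index.
views = [[[float(i)]] for i in range(9)]

# Hypothetical aperture shapes laid out on the 3 x 3 grid of views.
cross = [0, 1, 0,
         1, 1, 1,
         0, 1, 0]
top_row = [1, 1, 1,
           0, 0, 0,
           0, 0, 0]

cross_image = weighted_blend(views, cross)
top_image = weighted_blend(views, top_row)
```

Changing the mask changes which perspectives contribute to out-of-focus regions, and therefore the shape of the blur.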

The multispectral effects might be of more interest to a researcher than a broadcaster, but the right selection of coloured filters could make it easier for colour graders to match different lighting conditions, or cope with the problems of cheap LED lighting. More likely applications for this technology would be for use with computer vision (allowing a computer to spot which fruit is ripe and which is rotten, or to differentiate between real and artificial flowers).

By David Fox