Reflecting on this year’s NAB Show, I was struck by two extremes: emerging technology that’s still on the fringe, like 8K video, and dying technology that’s still in use, such as FTP. The media industry has long pushed technology forward, yet it’s surprising how much old technology lingers in its infrastructure, sometimes even within the same company. NAB is a place where old meets new, often head-on.

Moving to 8K capture

At this point, the move to 8K capture seems inevitable. Several prominent players in the 8K ecosystem, including NHK, Sony, Sharp and Ikegami, showed off new cameras in Las Vegas, even though 8K broadcasts are rare and consumer 8K displays are almost nonexistent.

However, one 8K broadcast earlier this year is getting a lot of attention. 8K coverage of the Winter Games in PyeongChang was viewed by audiences in a special theatre at the International Broadcast Center and on some private screens in Japan. What mattered was not so much the number of people who experienced 8K as the demonstration that it was possible at such a prominent, global sports event.

Japanese broadcaster NHK piloted a single 8K camera at the Rio Summer Games. In PyeongChang, it expanded its 8K UHD coverage to ten 8K cameras (from Sony, Ikegami and Astro), transmitting eight hours per day and totalling some 90 hours of 8K content. NHK also covered the Paralympics in 8K for the first time.

The success of 8K at PyeongChang has encouraged other broadcasters to jump in, with several countries planning live viewings of the Tokyo 2020 Summer Games in cinemas and other large venues.

If we are witnessing another leap in image resolution standards like those we have seen before, from SD to 2K to 4K, then the files moving over IP networks throughout production, post production and distribution are about to get much bigger.

How big is an 8K file?

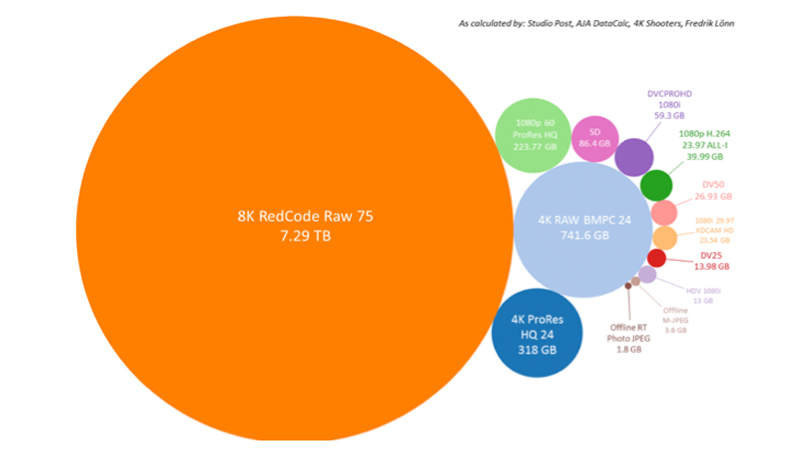

Here’s a rough approximation (each circle represents one hour of video for the specified format).
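To put rough numbers on that, here is a back-of-the-envelope calculation in Python. It assumes uncompressed 10-bit 4:2:2 video (20 bits per pixel on average) at 60 frames per second; these are illustrative assumptions, not the parameters behind the figure, and real camera files are usually compressed and therefore much smaller. What carries over is the ratio: each step from HD (roughly the 2K tier) to 4K to 8K quadruples the pixel count, and the data with it.

```python
# Rough, uncompressed storage estimate for one hour of video.
# Assumptions (not taken from the figure): 10-bit 4:2:2 sampling,
# i.e. 20 bits per pixel on average, 60 frames per second, no compression.

BITS_PER_PIXEL = 20   # 10-bit luma + 10-bit chroma shared by each pixel pair (4:2:2)
FPS = 60
SECONDS_PER_HOUR = 3600

RESOLUTIONS = {
    "HD (1920x1080)": (1920, 1080),
    "4K UHD (3840x2160)": (3840, 2160),
    "8K UHD (7680x4320)": (7680, 4320),
}

def bytes_per_hour(width: int, height: int,
                   bits_per_pixel: int = BITS_PER_PIXEL,
                   fps: int = FPS) -> int:
    """Uncompressed size in bytes of one hour of video in the given format."""
    bits_per_frame = width * height * bits_per_pixel
    return bits_per_frame * fps * SECONDS_PER_HOUR // 8

if __name__ == "__main__":
    for name, (w, h) in RESOLUTIONS.items():
        print(f"{name}: {bytes_per_hour(w, h) / 1e12:.2f} TB per hour")
```

Run as a script, it prints about 1.12 TB per hour for HD, 4.48 TB for 4K and 17.92 TB for 8K under those assumptions.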

The huge files produced by 8K capture will continue to push the industry to innovate in other technical areas. Intelligent file acceleration solutions, which can transfer files of any size with speed, reliability and security, will become even more critical.

Moving away from FTP

On the other extreme is FTP. FTP is one of the original members of the internet protocol suite and has been used to transfer files since the 1970s, well before the internet was a world wide web of connected machines responding to the whole of humanity’s whims.

When the media industry transitioned from tape to file-based workflows, FTP was the obvious first choice for transferring files over IP networks, and many small media companies still rely on it today.

But most of the industry has moved away from FTP for its primary workflows. FTP struggles with the large files and long distances that have been common in media production for the last decade, and most media companies now use accelerated file transfer software instead.
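One reason distance hurts is that a single TCP connection, which is what an FTP data transfer uses, can have at most one receive window of unacknowledged data in flight per round trip. The sketch below computes that ceiling, throughput ≤ window / RTT. The 64 KiB window (what you get without TCP window scaling) and the two round-trip times are illustrative assumptions; modern stacks often negotiate larger windows, and packet loss pushes real throughput lower still. It is not a model of how any particular acceleration product works.

```python
# Window-limited ceiling on a single TCP stream (e.g. one FTP data connection).
# Assumptions for illustration: a 64 KiB receive window (no window scaling)
# and example round-trip times; real networks vary.

WINDOW_BYTES = 64 * 1024   # 65,536 bytes in flight per round trip
ONE_TB = 1e12              # file size in bytes

def max_tcp_throughput_bps(window_bytes: int, rtt_seconds: float) -> float:
    """At most one window per round trip, so throughput <= window / RTT (bits/s)."""
    return window_bytes * 8 / rtt_seconds

def transfer_hours(file_bytes: float, throughput_bps: float) -> float:
    """Hours needed to move file_bytes at a constant throughput_bps."""
    return file_bytes * 8 / throughput_bps / 3600

if __name__ == "__main__":
    for label, rtt in [("regional, 10 ms RTT", 0.010),
                       ("intercontinental, 150 ms RTT", 0.150)]:
        bps = max_tcp_throughput_bps(WINDOW_BYTES, rtt)
        print(f"{label}: <= {bps / 1e6:.1f} Mbps, "
              f"1 TB takes >= {transfer_hours(ONE_TB, bps):.0f} hours")
```

Under these assumptions the ceiling is roughly 52 Mbps at 10 ms and 3.5 Mbps at 150 ms, which stretches a 1 TB transfer from about 42 hours to about 636 hours, however fast the underlying link is.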

However, during my many conversations at NAB, I was surprised to realise how many enterprises still use FTP for a variety of use cases. While many companies actually prohibit the use of FTP, some allow it to persist in seemingly benign workflows.

Is FTP benign?

The enterprises that banned FTP did so because they could no longer keep it secure. Plain FTP has no built-in encryption: usernames, passwords and file contents all travel in cleartext. That has made it a favorite target for malicious hackers, bots and malware distributors, and it’s why major browsers including Chrome, Safari and Firefox have begun blocking FTP subresources from loading inside HTTP and HTTPS pages or labeling FTP sites as insecure.

My alarm at discovering so many companies still use FTP is shared by cybersecurity experts. A recent research report by Digital Shadows examining the most common file sharing services across the Internet found over 12 petabytes of data exposed and completely open for the taking around the world, most of it in the United States and 26 percent of it from FTP. So those companies are far from alone.

The report also stated that sensitive data is the biggest cause for concern, including personal information, intellectual property and security assessments.

Additionally, third parties and contractors were found to be among the most common sources of sensitive data exposures. These third parties are not trying to harm anyone; they are simply using FTP to do the work they were hired to do. The projects for which enterprises still use FTP are likely creating a huge security hole in their companies.
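A practical first step is simply finding where FTP is still in use, including endpoints shared with contractors. The sketch below is a hypothetical inventory check: it takes a list of transfer endpoints (the example.com hosts are placeholders) and flags those whose URL scheme sends data in cleartext, using only Python’s standard `urllib.parse`. A scheme is a crude signal: an `ftp://` server might still negotiate TLS through explicit FTPS (the AUTH TLS command), so treat flagged entries as items to investigate rather than confirmed exposures.

```python
from urllib.parse import urlparse

# Hypothetical classification used only for this sketch.
CLEARTEXT_SCHEMES = {"ftp", "http"}            # no encryption by default
ENCRYPTED_SCHEMES = {"ftps", "sftp", "https"}  # TLS- or SSH-based transports

def classify_transfer_url(url: str) -> str:
    """Label an endpoint 'insecure', 'encrypted' or 'unknown' by URL scheme."""
    scheme = urlparse(url).scheme.lower()
    if scheme in CLEARTEXT_SCHEMES:
        return "insecure"
    if scheme in ENCRYPTED_SCHEMES:
        return "encrypted"
    return "unknown"

def audit(urls: list[str]) -> list[str]:
    """Return the endpoints that would move files in cleartext."""
    return [u for u in urls if classify_transfer_url(u) == "insecure"]

if __name__ == "__main__":
    partner_endpoints = [
        "ftp://dailies.partner-a.example.com/incoming",
        "sftp://vfx.partner-b.example.com/deliveries",
        "https://review.partner-c.example.com/upload",
    ]
    for url in audit(partner_endpoints):
        print("Review:", url)
```

Keeping the check to URL schemes makes it cheap to run across partner contracts and config files; a fuller audit would also probe each server to see what it actually negotiates.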

Many business leaders are already taking security way more seriously than they did even five years ago, educating themselves in areas that were once the domain of their IT department. However, business leaders are going to have to look beyond the boundaries of their own company.

As we see more security breaches this year, with FTP a likely cause, the industry will have to become more collaborative in its security practices and recognise the need for security across the entire media content supply chain. New standards such as the DPP’s ‘Committed to Security’ mark are a good place to start.

People love Media Shuttle

For companies wrestling with either or both extremes, large file transfers and security challenges, one thing stood out: people really love Media Shuttle, Signiant’s SaaS accelerated file transfer solution. Security has long been at the core of Signiant’s expertise, and people increasingly recognise that. But they especially seem to love the user-centric care we’ve put into the design of Shuttle.

As files continue to get bigger, workflows more complex and security threats more real than ever, that’s where Signiant shines. And, while we all left Vegas exhausted from another busy NAB, we were also more energised than ever to help companies transition from old to new.