Amazon Web Services (AWS) has released a Live Streaming with Automated Multi-Language Subtitling solution for automatically generating multi-language subtitles for live streaming in real time.

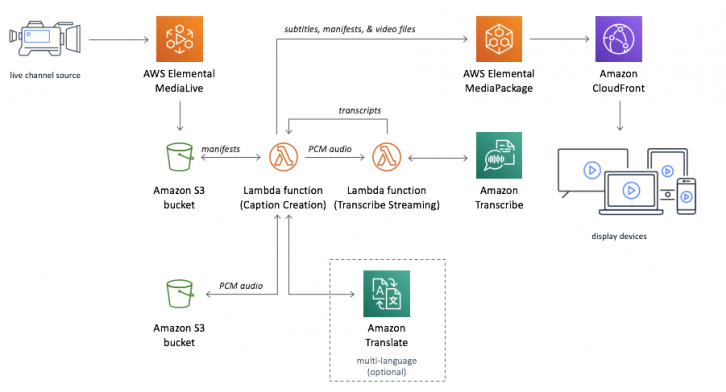

AWS Live Streaming encodes and packages content for adaptive bitrate streaming across multiple screens, then converts the audio to text using AWS Lambda, Amazon Transcribe and Amazon Translate.

The solution can be used out-of-the-box, customised for specific use cases, or implemented together with AWS Partner Network (APN) partners as part of an end-to-end subtitling workflow.

Subtitle generation starts when AWS Elemental MediaLive output is sent to the solution’s Amazon S3 bucket. The CaptionCreation Lambda function takes the manifest files from the bucket, extracts unsigned pulse-code modulation (PCM) audio from the transport stream (TS) video segments, and saves the PCM audio to Amazon S3.
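As a rough illustration of the first step, the sketch below lists the TS segments referenced by a manifest. It assumes the manifest is an HLS media playlist; the function name `list_ts_segments` and the sample manifest are hypothetical and are not taken from the solution's code. Extracting the PCM audio itself would need a media tool and is not shown.

```python
from typing import List, Tuple


def list_ts_segments(manifest_text: str) -> List[Tuple[str, float]]:
    """Return (segment_uri, duration_seconds) pairs from an HLS media playlist.

    Hypothetical helper: assumes each segment URI is preceded by an
    "#EXTINF:<duration>,[<title>]" tag, as in a standard HLS media playlist.
    """
    segments = []
    pending_duration = None
    for raw_line in manifest_text.splitlines():
        line = raw_line.strip()
        if line.startswith("#EXTINF:"):
            # Duration is the value between the colon and the first comma.
            pending_duration = float(line[len("#EXTINF:"):].split(",", 1)[0])
        elif line and not line.startswith("#") and pending_duration is not None:
            # First non-tag line after #EXTINF is the segment URI.
            segments.append((line, pending_duration))
            pending_duration = None
    return segments


# Made-up manifest for illustration only.
SAMPLE_MANIFEST = """#EXTM3U
#EXT-X-VERSION:3
#EXT-X-TARGETDURATION:6
#EXT-X-MEDIA-SEQUENCE:100
#EXTINF:6.0,
channel_100.ts
#EXTINF:6.0,
channel_101.ts
"""

if __name__ == "__main__":
    for uri, duration in list_ts_segments(SAMPLE_MANIFEST):
        print(uri, duration)
```

Each returned pair identifies one segment whose audio track would then be decoded to PCM and written back to Amazon S3.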

The TranscribeStreaming function then converts the audio stream to text in real time, before sending the transcript back to CaptionCreation. If multiple languages are required, the CaptionCreation function calls Amazon Translate to translate the transcript.
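The translation step could look something like the following minimal sketch. It assumes a client object exposing the Amazon Translate `translate_text` operation (for example, one created with `boto3.client("translate")`); the function name `translate_transcript` and its parameters are hypothetical, not the solution's actual code.

```python
from typing import Dict, List


def translate_transcript(
    translate_client,
    transcript: str,
    source_lang: str,
    target_langs: List[str],
) -> Dict[str, str]:
    """Return a mapping of language code to caption text.

    Hypothetical helper: `translate_client` is assumed to behave like a
    boto3 Amazon Translate client.
    """
    # The source-language transcript is kept as-is.
    captions = {source_lang: transcript}
    for lang in target_langs:
        if lang == source_lang:
            continue  # nothing to translate for the source language
        response = translate_client.translate_text(
            Text=transcript,
            SourceLanguageCode=source_lang,
            TargetLanguageCode=lang,
        )
        captions[lang] = response["TranslatedText"]
    return captions
```

Passing the client in as an argument keeps the function easy to exercise with a stub, without calling AWS.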

MediaPackage ingests the files and packages them into formats that are delivered to four MediaPackage custom endpoints.