3D hasn’t died; it’s merely resting. Or at least it is waiting for the day decent-quality glasses-free displays arrive. Taking a new approach to the problem, Disney’s Zurich R&D team has devised a hardware prototype that converts stereoscopic 3D video into content suitable for multiview autostereoscopic displays in real time.

“Today, most commercially available 3D display systems require viewers to wear some sort of shutter or polarisation glasses, which is inconvenient,” explained the group’s digital circuit and systems expert Michael Schaffner. “On the other hand, it is difficult to create content for multiview autostereo displays.

“Moreover, storing and transmitting HD content with more than two views is costly, and in some cases infeasible. To bridge this content-display gap, so-called multiview synthesis (MVS) methods, which generate several virtual views from a small set of input views, have been developed over the past couple of years.”
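To make the idea of "generating virtual views from a small set of input views" concrete, here is a deliberately simplified sketch. It is not Disney’s image domain warping method, which is not described in detail here; it swaps in plain disparity-based forward warping on a single scanline. It assumes a per-pixel disparity map from the left view to the right view is already known (positive disparity meaning content sits further left in the right view), and that the eight virtual cameras are spaced evenly between the two input cameras. The functions `synthesize_row` and `synthesize_views` are hypothetical names used only for illustration.

```python
def synthesize_row(left_row, disparity_row, alpha):
    """Forward-warp one scanline of the left view to virtual position alpha.

    Simplified stand-in for MVS (not image domain warping):
    alpha = 0 reproduces the left view, alpha = 1 approximates the right view.
    Assumes disparity d = x_left - x_right, so content moves left by alpha * d.
    """
    width = len(left_row)
    out = [None] * width
    # Warp in order of increasing disparity so nearer content (larger
    # disparity) overwrites farther content landing on the same pixel.
    order = sorted(range(width), key=lambda x: disparity_row[x])
    for x in order:
        target = round(x - alpha * disparity_row[x])
        if 0 <= target < width:
            out[target] = left_row[x]
    # Fill disocclusion holes from the nearest warped pixel on the left...
    for x in range(1, width):
        if out[x] is None:
            out[x] = out[x - 1]
    # ...then patch any holes at the start of the row from the right.
    for x in range(width - 2, -1, -1):
        if out[x] is None:
            out[x] = out[x + 1]
    return out


def synthesize_views(left_row, disparity_row, num_views=8):
    """Generate num_views virtual scanlines at evenly spaced positions in [0, 1]."""
    positions = [i / (num_views - 1) for i in range(num_views)]
    return [synthesize_row(left_row, disparity_row, a) for a in positions]


views = synthesize_views([10, 20, 30, 40, 50], [2, 2, 2, 2, 2])
print(views[0])   # the left view itself
print(views[-1])  # the view at the right camera position
```

A real system works on full 2D frames, must estimate disparity (or, in IDW, sparse image features) from the stereo pair, and needs far better hole filling; the sketch only shows why a stereo pair plus scene geometry is enough to extrapolate the extra views a multiview display needs.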

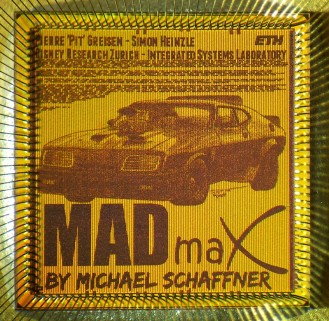

The Disney team devised a complete MVS pipeline that synthesises content for an eight-view full-HD display from full-HD stereo 3D input at 30fps. “Our hybrid FPGA/ASIC prototype is the first complete real-time system that is entirely implemented in hardware,” said Schaffner.
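For a sense of the workload, the pixel rates implied by those figures can be worked out directly. This assumes "full HD" means 1920×1080 and that every one of the eight output views is rendered at full resolution; the article does not say how the views are packed for the display panel, so treat the output figure as an upper-bound estimate.

```python
# Assumption: full HD = 1920 x 1080 pixels per view.
FULL_HD_WIDTH, FULL_HD_HEIGHT = 1920, 1080


def pixel_rate(views, fps, width=FULL_HD_WIDTH, height=FULL_HD_HEIGHT):
    """Pixels per second for `views` full-resolution views at `fps` frames/s."""
    return views * width * height * fps


input_rate = pixel_rate(views=2, fps=30)   # stereo input
output_rate = pixel_rate(views=8, fps=30)  # eight synthesised views
print(f"input:  {input_rate:,} pixels/s")   # input:  124,416,000 pixels/s
print(f"output: {output_rate:,} pixels/s")  # output: 497,664,000 pixels/s
```

Roughly half a billion output pixels per second is the kind of sustained, regular throughput that favours a dedicated hardware pipeline over a general-purpose processor.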

The prototype implements a conversion method developed at Disney Research that is based on image domain warping (IDW). This development paves the way for a fully integrated ‘system on a chip’ (SoC) for TVs and mobile devices, in which the MVS hardware would serve as a power-efficient accelerator.

“We estimate that our IP would consume less than 2W and occupy around 9mm² of silicon area in 28nm CMOS technology,” added Schaffner.