360-degree video is growing in prominence among users at every level, from consumers to film studios, and stitching the video output from multiple sensors is a key part of the process.

Until now there has been a choice between speed and quality. Post tools can produce excellent results, but at the cost of time and effort of skilled operators, while software-based real-time stitching techniques typically achieve a more basic result.

Argon360 is claimed by its developer to offer high-quality stitching in real time, using custom-designed hardware logic in a chip with low power consumption.

Video from a VR rig’s individual sensors is passed to image signal processing blocks, then stitched into a single output by Argon360 and further processed on the chip for viewing, streaming or storage. The patent-pending technology is available as an IP core building block for incorporation into an ASIC, or as an FPGA implementation suitable for lower-volume products.

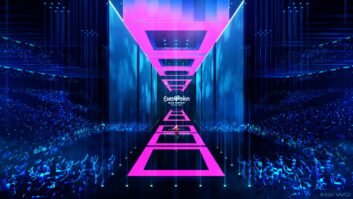

The results can be viewed in the IBC Future Zone on a spherical interactive display from Pufferfish.

Pufferfish was formed by two undergraduates at the University of Edinburgh who used their own resources to develop the ideas for commercial products. The innovation has been used at a wide variety of events and installations, including at museums, at rock concerts, and for presentation as part of a TV news studio.

8.G14